Oscar (AI Assistant)

Prompting Guide

Practical prompt examples and techniques to get the most useful answers from Oscar.

Use this guide to write clearer prompts, understand what kinds of questions work best, and refine results when the first answer is too broad or too narrow.

Prompt Types

Simple Prompts

Single factual checks. Best for quick lookups.

Filtered Prompts

Questions with time windows, project scope, or attribute filters.

Cross-Entity Prompts

Decision-oriented questions that span requests, allocations, and vehicles.

Follow-Up Prompts

Refine or build on a previous answer within the same conversation.

Discovery Prompts

Explore specs, attributes, or fleet composition.

Statistical Prompts

Aggregate counts, percentages, and summaries.

Help Prompts

Ask how a Fleetwise feature or workflow works.

Examples by Type

Simple

| Prompt | What You Get |
|---|---|
| "How many vehicles are currently available?" | Count of vehicles with no active allocation |
| "Which vehicles are in maturity level 3?" | Filtered list by maturity attribute |
| "What is the status of request #4521?" | Current state and linked allocation |

Filtered

| Prompt | What You Get |
|---|---|
| "Which vehicles are free from May 15 to May 22 for project Atlas?" | Availability filtered by date and project |
| "Are there any ADAS High vehicles available in Germany next week?" | Combined spec, location, and date filter |
| "Show all requests submitted in the last 7 days" | Time-bounded request list |

Cross-Entity

| Prompt | What You Get |
|---|---|
| "Can I extend the allocation for vehicle 2334 by 3 days?" | Availability check for the extension window |
| "Show my current allocations and any conflicts by end date" | Allocation list with clash analysis |
| "Which requests are at risk of not being fulfilled this month?" | Requests without matching available vehicles |
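
To make "availability check for the extension window" concrete, here is a minimal sketch of the kind of date-overlap test such a question implies. It is an illustration only, not Oscar's or Fleetwise's actual logic; the function `can_extend` and the sample bookings are hypothetical.

```python
from datetime import date, timedelta

def can_extend(current_end, extra_days, other_bookings):
    """Return True if the extension window is free of other bookings.

    other_bookings is a list of (start, end) date pairs, both inclusive.
    All names and data here are hypothetical, for illustration only.
    """
    window_start = current_end + timedelta(days=1)
    window_end = current_end + timedelta(days=extra_days)
    # Two inclusive date ranges overlap when each starts on or before
    # the day the other one ends.
    return not any(
        start <= window_end and end >= window_start
        for start, end in other_bookings
    )

# Hypothetical data: the vehicle is booked again from 25 May to 30 May.
later_bookings = [(date(2025, 5, 25), date(2025, 5, 30))]

print(can_extend(date(2025, 5, 22), 3, later_bookings))  # False: 23-25 May clashes
print(can_extend(date(2025, 5, 22), 2, later_bookings))  # True: 23-24 May is free
```

A result like the first one is where a follow-up such as "What is the next best option?" becomes useful.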

Follow-Up

| Prompt | What You Get |
|---|---|
| "Can I extend that one?" | Answer that uses the context of the previous response |
| "What is the next best option?" | Alternatives to the last recommendation |
| "Filter those results to Germany only" | Narrowed version of the previous result set |

Discovery

| Prompt | What You Get |
|---|---|
| "Which paint colour variants exist in this project?" | Attribute value listing |
| "Which vehicles support the V8 engine variant?" | Spec-based vehicle filter |
| "What spec code families are configured?" | Project spec configuration summary |

Statistical

| Prompt | What You Get |
|---|---|
| "What percentage of our fleet is allocated today?" | Allocation utilisation metric |
| "Which team has the highest active allocation count?" | Team-level comparison |
| "How many requests were completed last month?" | Completion count |
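
As a rough illustration of the arithmetic behind an allocation utilisation figure, the sketch below assumes utilisation means allocated vehicles divided by total fleet size. That definition, the function name `utilisation_pct`, and the sample numbers are assumptions for illustration, not Fleetwise's documented calculation.

```python
def utilisation_pct(allocated, fleet_size):
    """Share of the fleet that is allocated, as a percentage (one decimal).

    Assumed definition: allocated vehicles / total vehicles * 100.
    """
    if fleet_size == 0:
        return 0.0  # avoid dividing by zero for an empty fleet
    return round(100 * allocated / fleet_size, 1)

# Hypothetical numbers: 42 of 120 vehicles are allocated today.
print(utilisation_pct(42, 120))  # 35.0
```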

Help

| Prompt | What You Get |
|---|---|
| "How do I submit a vehicle request?" | Workflow explanation with steps |
| "How does allocation status change from tentative to active?" | State transition explanation |
| "What is the difference between essential and preferred requirements?" | Concept comparison |

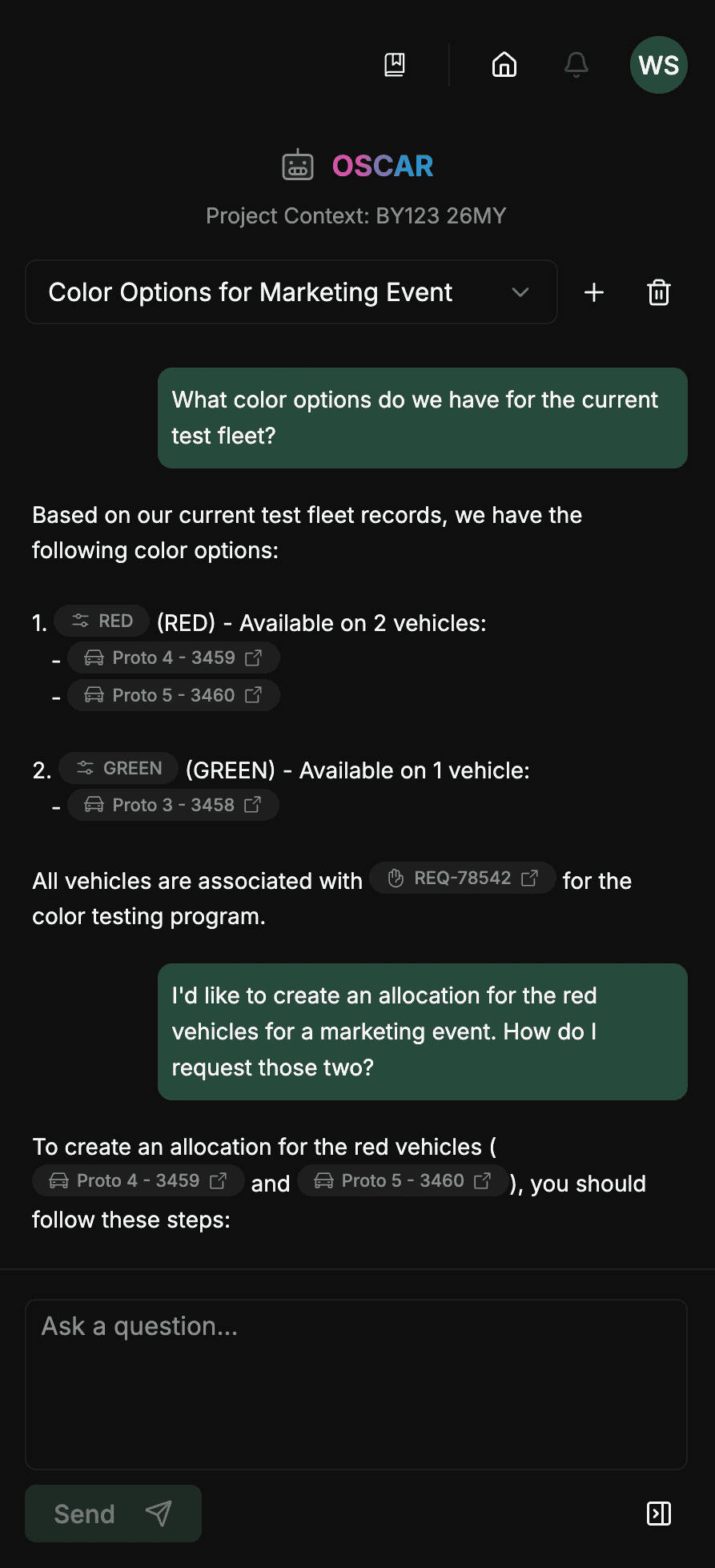

Here's an example of a conversation with Oscar:

Tips for Better Results

- Be specific about project, dates, and requirements.

- Use natural language — strict command syntax is not required.

- Ask follow-ups to narrow a broad result set.

- Add constraints such as location, time window, or attributes if the first result is too broad.

- Treat Oscar as decision support: validate critical actions in the Fleetwise UI before you act on them.
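
The tips on being specific and adding constraints can be sketched as a small prompt template. Oscar accepts plain natural language, so nothing like this is required; the helper `build_prompt` and its parameters are hypothetical and only show how each added constraint narrows the question.

```python
def build_prompt(base, attributes=(), location=None, start=None, end=None, project=None):
    """Compose a specific natural-language prompt from optional constraints.

    Hypothetical helper for illustration; not part of Oscar or Fleetwise.
    """
    parts = [base.rstrip("?. ")]
    if attributes:
        parts.append("with " + " and ".join(attributes))
    if location:
        parts.append(f"in {location}")
    if start and end:
        parts.append(f"from {start} to {end}")
    if project:
        parts.append(f"for project {project}")
    return " ".join(parts) + "?"

# A broad prompt, then the same question with constraints added.
print(build_prompt("Which vehicles are available"))
print(build_prompt(
    "Which vehicles are available",
    attributes=["ADAS High"],
    location="Germany",
    start="May 15",
    end="May 22",
    project="Atlas",
))
```

The second call produces "Which vehicles are available with ADAS High in Germany from May 15 to May 22 for project Atlas?", which names the project, dates, location, and requirement in a single question.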

Current Limitations

- Oscar cannot make changes in Fleetwise (submit requests, confirm allocations, etc.).

- Answers reflect the data in Fleetwise at the time you ask. Oscar does not predict future changes in availability.

- Very complex multi-step questions may need to be broken into simpler prompts.

- Oscar's knowledge of platform features reflects the current release.